
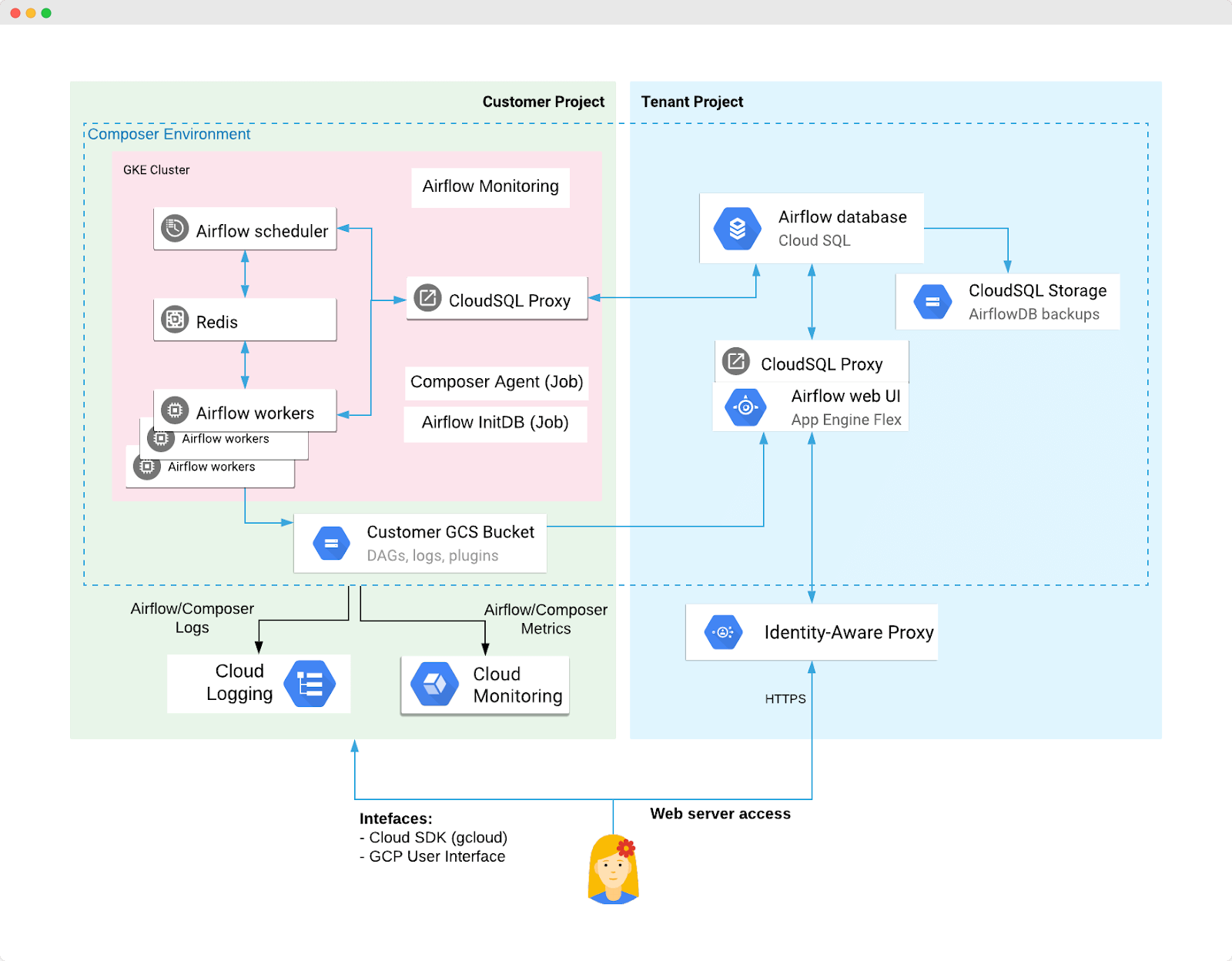

The instructor has over 25 years of IT industry experience (product development, consulting and training). His last engagement, which lasted more than 12 years, was with Oracle India (initially with consulting and then with Oracle University). Prior to this, he worked with Cap Gemini (formerly iGate Global Solution) and GE, to name a few. He has managed projects and programs in Enterprise Resource Planning and Business Intelligence implementations in the range of 3000 man-days, with revenue of about US$6 million per year.

With Apache Airflow hosted in the cloud (Google Cloud Composer), learners can focus on Apache Airflow's functionality and learn quickly, without the hassle of installing Apache Airflow locally on a machine. This course is designed with beginners in mind, that is, first-time users of Cloud Composer / Apache Airflow. It is structured so that a presentation first discusses the concepts, followed by a hands-on demonstration to improve understanding. The Python DAG programs used in the demonstrations (9 Python files) are available for download so that students can practice further.

Built on the popular Apache Airflow open source project and operated using the Python programming language, Cloud Composer is free from lock-in and easy to use. One-click deployment yields instant access to a rich library of connectors and multiple graphical representations of your workflow in action, increasing pipeline reliability by making troubleshooting easy. Cloud Composer pipelines are configured as directed acyclic graphs (DAGs) using Python, making it easy for users of any experience level to author and schedule a workflow.
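To make the term "directed acyclic graph" concrete, here is a minimal sketch that uses only Python's standard-library `graphlib` module. It is not Airflow's API and not one of the course's DAG files; the task names and the helper functions are hypothetical. It only illustrates the idea behind a DAG: tasks plus "runs after" dependencies, with no cycles, from which a valid run order can be derived.

```python
from graphlib import CycleError, TopologicalSorter

# Hypothetical pipeline: each key is a task, each value is the set of
# tasks that must finish before that task may start.
pipeline = {
    "extract": set(),
    "transform_sales": {"extract"},
    "transform_users": {"extract"},
    "load": {"transform_sales", "transform_users"},
}


def execution_order(dependencies):
    """Return one valid run order; raises CycleError if a cycle exists."""
    return list(TopologicalSorter(dependencies).static_order())


def is_acyclic(dependencies):
    """Report whether the dependency graph is a valid DAG."""
    try:
        execution_order(dependencies)
        return True
    except CycleError:
        return False


order = execution_order(pipeline)
print(order)
```

The two transform tasks do not depend on each other, so either may come first in the printed order. That independence is what lets a scheduler run such tasks in parallel, and a dependency loop is rejected because no valid order exists for it.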
Apache Airflow is an open-source platform to programmatically author, schedule and monitor workflows. Cloud Composer is a fully managed workflow orchestration service that empowers you to author, schedule, and monitor pipelines that span across clouds and on-premises data centers.

As I had been looking at hosted solutions for Airflow, I decided to take Cloud Composer for a spin this week. The nice thing about hosted solutions is that you as a Data Engineer or Data Scientist don’t have to spend that much time on DevOps - something you might not be very good at (at least I’m not!). So, below is a very brief write-up of the experience testing out Cloud Composer. This is by no means an exhaustive evaluation - it’s simply my first impression of Cloud Composer.

If you have a Google Cloud account, an environment is really just a few clicks away (plus ~20 minutes of waiting for your environment to boot). You can also easily list your required Python libraries from the Python Package Index (PyPI), set environment variables, and so on. When your environment is up and running, the Google Cloud UI is clean and hassle-free: it just links to the DAG folder and to your Airflow webserver, which is where you’ll be spending most of your time.

Deployment is simple. Your DAGs folder sits in a dedicated bucket in Google Cloud Storage. This means you could literally drag-and-drop the contents of your DAG folder to deploy new DAGs. Within seconds the DAG appears in the Airflow UI. Of course, drag-and-drop is not the only option. You could also do deployment programmatically by using Google Cloud’s gcloud command-line tool.
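The programmatic route mentioned above could look roughly like the following sketch, which wraps the `gcloud composer environments storage dags import` command from Python. The environment name, location and file path are placeholders I made up, and the helper functions are hypothetical. By default it only prints the command (a dry run), so nothing is uploaded unless you opt in.

```python
import shlex
import subprocess


def build_dag_import_command(environment, location, dag_file):
    """Build the gcloud call that copies a DAG file into the
    environment's DAGs folder in Cloud Storage."""
    return [
        "gcloud", "composer", "environments", "storage", "dags", "import",
        "--environment", environment,
        "--location", location,
        "--source", dag_file,
    ]


def deploy_dag(environment, location, dag_file, dry_run=True):
    """Print the command (dry run) or execute it with gcloud."""
    command = build_dag_import_command(environment, location, dag_file)
    if dry_run:
        # Show what would be executed without touching Google Cloud.
        print(shlex.join(command))
    else:
        # Requires an installed and authenticated gcloud CLI.
        subprocess.run(command, check=True)
    return command


# Placeholder values; replace them with your own environment details.
command = deploy_dag("example-environment", "us-central1", "dags/example_dag.py")
```

Calling `deploy_dag(..., dry_run=False)` would run the upload for real. Keeping the command construction in its own function makes it easy to check the arguments before anything is sent to Google Cloud.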
